2.1 First Steps - Programming with Message Passing

The Message Passing Interface (MPI) is an industry standard for passing messages between processes in a distributed memory system. MPI is supported by a variety of languages and is widely used for parallel programming. It enables multiple processes to communicate by passing data from one process to another; that data is the "message" of a message passing system.

MPI programs work on a single multicore machine or on a distributed memory system (or cluster).

MPI allows programmers to divide up a task and distribute part of the computation to each process, which works on its own sub-task simultaneously.
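To make the idea of dividing up a task concrete, here is a minimal sketch in plain Python, with no MPI calls yet. The helper name my_share and the numbers used are our own assumptions for illustration; they are not part of MPI or any library. Given a process number and the total number of processes, it computes which slice of the work that process would handle.

```python
def my_share(rank, num_processes, num_items):
    """Return the (start, stop) index range that one process works on.

    Hypothetical helper: splits num_items as evenly as possible, giving
    the first few processes one extra item when the split is uneven.
    """
    base, extra = divmod(num_items, num_processes)
    start = rank * base + min(rank, extra)
    stop = start + base + (1 if rank < extra else 0)
    return start, stop


# Simulate 4 processes sharing 10 items. In a real message passing
# program, each process would call my_share once with its own number.
for rank in range(4):
    print(rank, my_share(rank, 4, 10))
```

Each process works only on its own range, so the four ranges can be processed at the same time.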

In this chapter, we use the Python library mpi4py to introduce message passing and MPI. Several of the collective communication functions in mpi4py are designed to work with arrays created using NumPy, the Python library for numerical computation. As such, all examples involving lists employ NumPy arrays. Unlike Python lists, NumPy arrays hold only one type of data, and they are generally faster and take up less space than Python lists that contain data of only one type. NumPy arrays are specifically designed for fast mathematical computations and can be reshaped to form matrices and other multi-dimensional data structures. A detailed discussion of NumPy is beyond the scope of this book; for more about its features and available methods, we recommend consulting the documentation and available tutorials.
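The following short sketch illustrates the NumPy properties mentioned above: a single data type per array, reshaping, and elementwise arithmetic. The variable names are ours, chosen only for illustration.

```python
import numpy as np

# Every element of a NumPy array has the same type (the array's dtype).
values = np.array([1, 2, 3, 4, 5, 6])
print(values.dtype)        # the platform's default integer type

# Mixing integers and floats promotes the whole array to floats.
mixed = np.array([1, 2.5, 3])
print(mixed.dtype)         # float64

# The same six values can be viewed as a 2 x 3 matrix.
matrix = values.reshape(2, 3)
print(matrix.shape)        # (2, 3)

# Arithmetic applies to every element at once, without a Python loop.
doubled = values * 2
print(doubled)
```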

The SPMD Pattern

One of the foundational patterns in message passing is the single program multiple data (SPMD) pattern, where each process executes the same program, but on different units of data. The following program illustrates the concept of SPMD, where each process contains and produces its own small bit of data (in this case printing something about itself). Here is our first basic example of this, which you can run to see how it works.
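The interactive example itself is not reproduced in this text. As a stand-in, here is a minimal sketch of an SPMD program, written with the variable names discussed later in this section. The helper function greeting and the exact wording of the printed message are our assumptions, and so is the guarded import, which we added only so the file reports a readable message where mpi4py is not installed.

```python
def greeting(id, numProcesses, myHostName):
    """Build the line that each process prints about itself (hypothetical helper)."""
    return "Greetings from process {} of {} on {}".format(
        id, numProcesses, myHostName)


def main():
    comm = MPI.COMM_WORLD                  # communicator shared by all processes
    id = comm.Get_rank()                   # this process's number, starting at 0
    numProcesses = comm.Get_size()         # total number of processes started
    myHostName = MPI.Get_processor_name()  # name of the machine running this process

    print(greeting(id, numProcesses, myHostName))


if __name__ == "__main__":
    try:
        from mpi4py import MPI
    except ImportError:
        print("This example needs mpi4py and an MPI installation.")
    else:
        main()
```

Saved under a file name such as spmd.py (a name we made up), a sketch like this could be launched with a command along the lines of mpirun -np 4 python spmd.py. Every process runs the same main(), but each one prints a line containing its own id.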

Behind the scenes, this code is run by a program called mpirun, which starts this single program running on multiple processes. The box labeled “Flags for mpirun” is used to provide the number of processes to use. The default provided, ‘-np 4’, tells mpirun to start the program with 4 processes.

Exercises

Re-run this example a few times. Then run it with different numbers of processes by changing the 4 in ‘-np 4’ to 8, then 16. What do you notice about the output? This is an important point to realize about independent processes running in MPI.

Explain what “multiple data” values this “single program” is generating.

Additional Details about this code

Let’s look at each line in main() and the variables used:

comm: The fundamental notion with this type of computing is a process running independently on the computer. With one single program like this, we can specify that we want to start several processes, each of which can communicate with the others. The mechanism for communication is initialized when the program starts up, and the object that represents the means of communicating between processes is called MPI.COMM_WORLD, which we place in the variable comm.

id: Every process can identify itself with a number. We get that number by asking comm for it using the Get_rank() function.

numProcesses: It is helpful to know how many processes have started up, because this can be specified differently every time you run this type of program. Asking comm for it is done with the Get_size() function.

myHostName: When you run this code on a cluster of computers, it is sometimes useful to know which computer is running a certain piece of code. A particular computer is often called a host, which is why we call this variable myHostName. We get it by calling the Get_processor_name() function, which in mpi4py belongs to the MPI module itself rather than to comm.

These four variables appear in almost every MPI program. The first three are usually needed for writing correct programs, and the fourth is often used for debugging and for analyzing where certain computations are running.

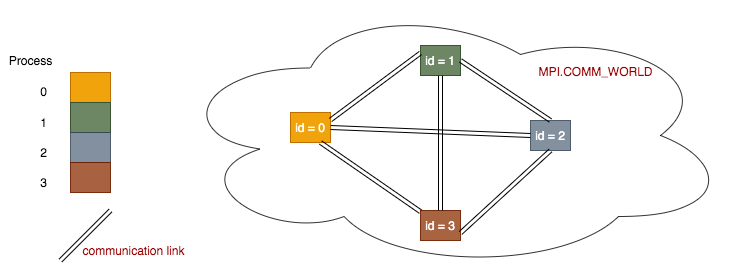

The fundamental idea of message passing programs can be illustrated like this:

Each process is set up within a communication network to be able to communicate with every other process via communication links. Each process is set up to have its own number, or id, which starts at 0.

Note

Each process holds its own copies of the four variables described above. So even though there is one single program, it is running multiple times in separate processes, each holding its own data values. This is the reason for the name of the pattern this code represents: single program, multiple data. The print line at the end of main() shows the different data that each process produces.

The Conductor-Worker Pattern

Let’s start with a very simple example that is a twist on the previous one.
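The interactive example is not reproduced in this text, so here is a minimal sketch of what such a program might look like. It reuses the same four variables as the SPMD example and branches on the process id. The helper function role_message, the message wording, and the guarded import are our assumptions, added for illustration.

```python
def role_message(id, numProcesses, myHostName):
    """Return a different greeting for process 0 than for all other processes."""
    if id == 0:
        # By convention, process 0 plays the conductor.
        return "Greetings from the conductor, #{} of {} on {}".format(
            id, numProcesses, myHostName)
    # Every other process is a worker.
    return "Greetings from a worker, #{} of {} on {}".format(
        id, numProcesses, myHostName)


def main():
    comm = MPI.COMM_WORLD
    id = comm.Get_rank()
    numProcesses = comm.Get_size()
    myHostName = MPI.Get_processor_name()

    print(role_message(id, numProcesses, myHostName))


if __name__ == "__main__":
    try:
        from mpi4py import MPI
    except ImportError:
        print("This example needs mpi4py and an MPI installation.")
    else:
        main()
```

No messages are exchanged yet; the only difference between the conductor and the workers is which branch of the if statement each process takes.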

Exercises

Rerun the above code, using varying numbers of processes from 1 through 8.

Explain what stays the same and what changes as the number of processes changes.

This basic code example illustrates what we can do with this pattern: based on the process id, we can have one process carry out something different than the others. This concept is used a lot as a means to coordinate activities.

The conductor-worker pattern is one of the most common patterns used in message passing programs. In more realistic programs, one process (designated the conductor) doles out tasks to a series of worker processes, which complete their assigned tasks and return the results to the conductor. We will see this in later examples where we add message passing.

Note

By convention, the conductor (the coordinating process) is usually process number 0.